Divide and Conquer: Parallel Computing with Goroutines in Golang

You can find the Chinese version at 分而治之 - 在 Golang 使用 Goroutine 進行平行運算

In Golang, starting a goroutine is very simple; you just need one line: go task(). This makes it easy to write concurrent programs. This article will explain how to use goroutines for parallel computing to maximize CPU performance.

What is "parallel computing"?

According to Wikipedia:

Parallel computing is a type of computation in which many calculations or processes are carried out simultaneously. Large problems can often be divided into smaller ones, which can then be solved at the same time.

In simple terms, it means taking a big task and breaking it into smaller tasks, then handling them in parallel.

Today's big task: adding up the numbers in a slice.

You might think, "What's so hard about summing up numbers in a slice? Even my grandma could do that." So, you quickly write a Sum function, running a loop to add up all the numbers.

func Sum(numbers []int) int {

total := 0

for _, n := range numbers {

total += n

}

return total

}

In the test, it took only 500,000 nanoseconds to sum up one million numbers, which is very fast.

Note: One second equals one billion nanoseconds

> go test -bench=^BenchmarkSum$

goos: darwin

goarch: amd64

BenchmarkSum-8 3000 502660 ns/op

However, because the Sum function above runs on a single thread, it only uses one CPU. If your computer has multiple CPUs, you could find a way to make them work together.

Dual-Core Improved Version

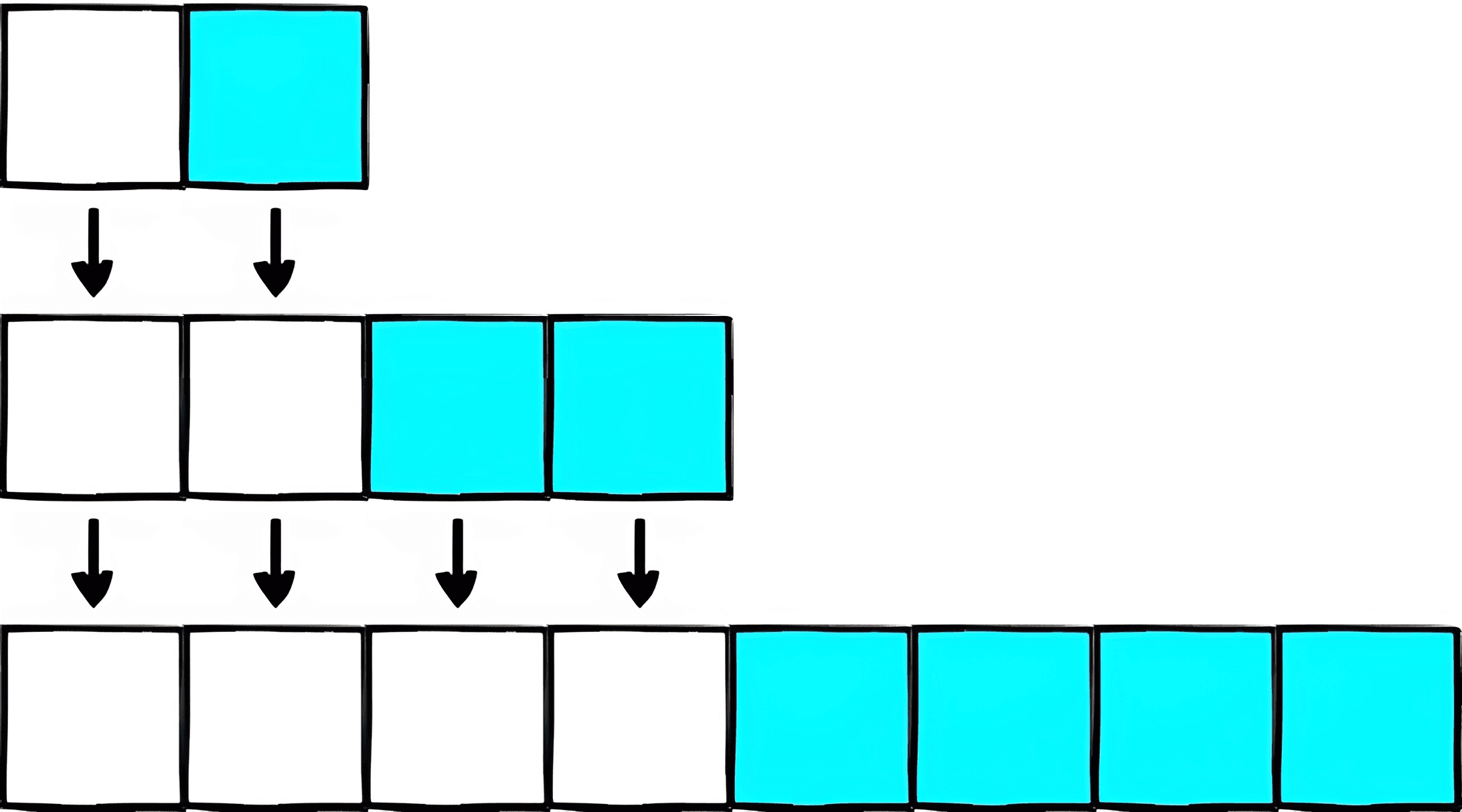

For example, if we can split a slice into two halves, let cpu1 add up the left half and cpu2 add up the right half. Then, combine their sums. This should be faster, right?

Following this idea, the code gives the left and right halves to different goroutines to sum up, then uses a channel to add the results together.

func SumTwoParallel(numbers []int) int {

mid := len(numbers) / 2

ch := make(chan int)

go func() { ch <- Sum(numbers[:mid]) }()

go func() { ch <- Sum(numbers[mid:]) }()

total := <-ch + <-ch

return total

}

The benchmark shows it only took 300,000 nanoseconds this time. it's much faster.

> go test -bench=^BenchmarkSumTwoParallel$

goos: darwin

goarch: amd64

BenchmarkSumTwoParallel-8 5000 300351 ns/op

Multi-Core General Version

The "Dual-Core Improved Version" splits the slice into left and right halves, but with more CPUs, we can split it into more parts.

First, use runtime.NumCPU() to get the number of CPUs. Then, split the slice into N parts, where N is the number of CPUs, and let different goroutines sum each part. Finally, combine all the results into a total. This way, the task is evenly distributed no matter how many CPUs the computer has.

func SumMaxParallel(numbers []int) int {

nCPU := runtime.NumCPU()

nNum := len(numbers)

ch := make(chan int)

for i := 0; i < nCPU; i++ {

from := i * nNum / nCPU

to := (i + 1) * nNum / nCPU

go func() { ch <- Sum(numbers[from:to]) }()

}

total := 0

for i := 0; i < nCPU; i++ {

total += <-ch

}

return total

}

On my 8-core laptop, it took about 160,000 nanoseconds, which is 3x faster compared to the initial 500,000 nanoseconds.

> go test -bench=^BenchmarkSumMaxParallel$

goos: darwin

goarch: amd64

BenchmarkSumMaxParallel-8 10000 163897 ns/op

Why is it only three times faster?

You might think, "For an eight-core computer, splitting the task into eight parts and processing them in parallel should be nearly eight times faster. Why is it only three times faster?"

I was also puzzled by this, so I traced the execution of each goroutine and found two main reasons:

Long wait time for threads to start

As shown in the picture below, the program starts with only one main thread. When creating eight goroutines, the system needs some time to start seven more threads. That means it can't start summing immediately with go func(){…}(), causing some delay.

But this isn't the fault of goroutines, as they start quickly. If there were a thread pool with pre-started threads, this issue could be solved.

The summing task is too simple

The delay in thread start-up alone shouldn't cause a significant slowdown. The task itself (slice summation) is very simple and runs quickly. So, even a slight delay in thread start-up leads to a big difference in benchmark results.

In the picture below, goroutine 15 took about 350,000 nanoseconds in total, with 230,000 nanoseconds waiting to be scheduled. About two-thirds of the time was spent waiting, making the result slow.

If the task were very complex and took a long time to execute, the delay in thread start-up would be relatively smaller and the benchmark results more valuable.

Summary

This example shows how to split a big task for parallel processing on multiple CPUs. Parallel computing can be used in many areas like merge sort, matrix multiplication, and map reduce. Even though this example is simple, the concept is the same.

When dealing with complex tasks, think about whether you can break them down into smaller tasks to handle separately.

All the code from this article is on the GitHub repo. Feel free to clone it to your computer and run the benchmark.